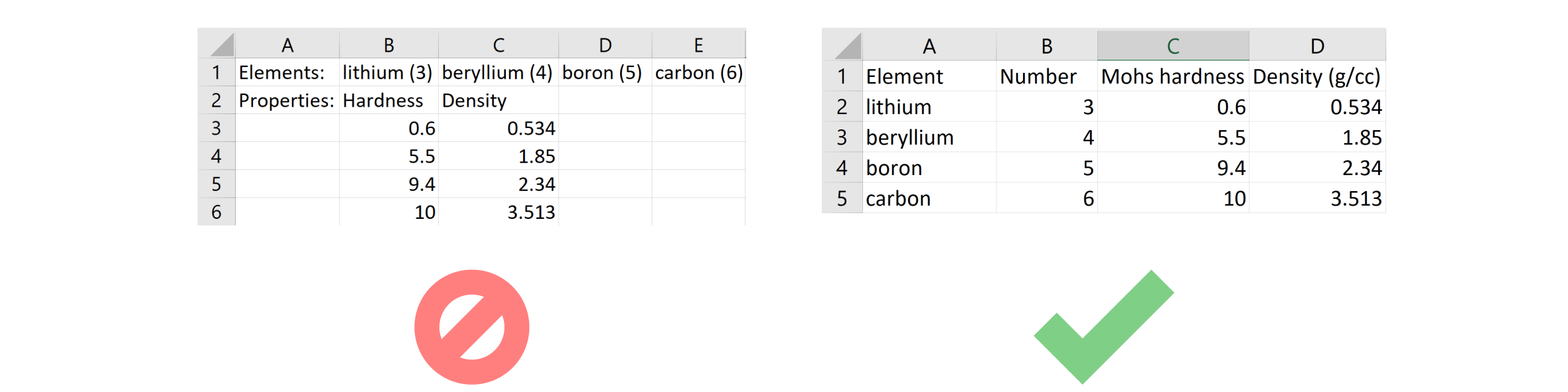

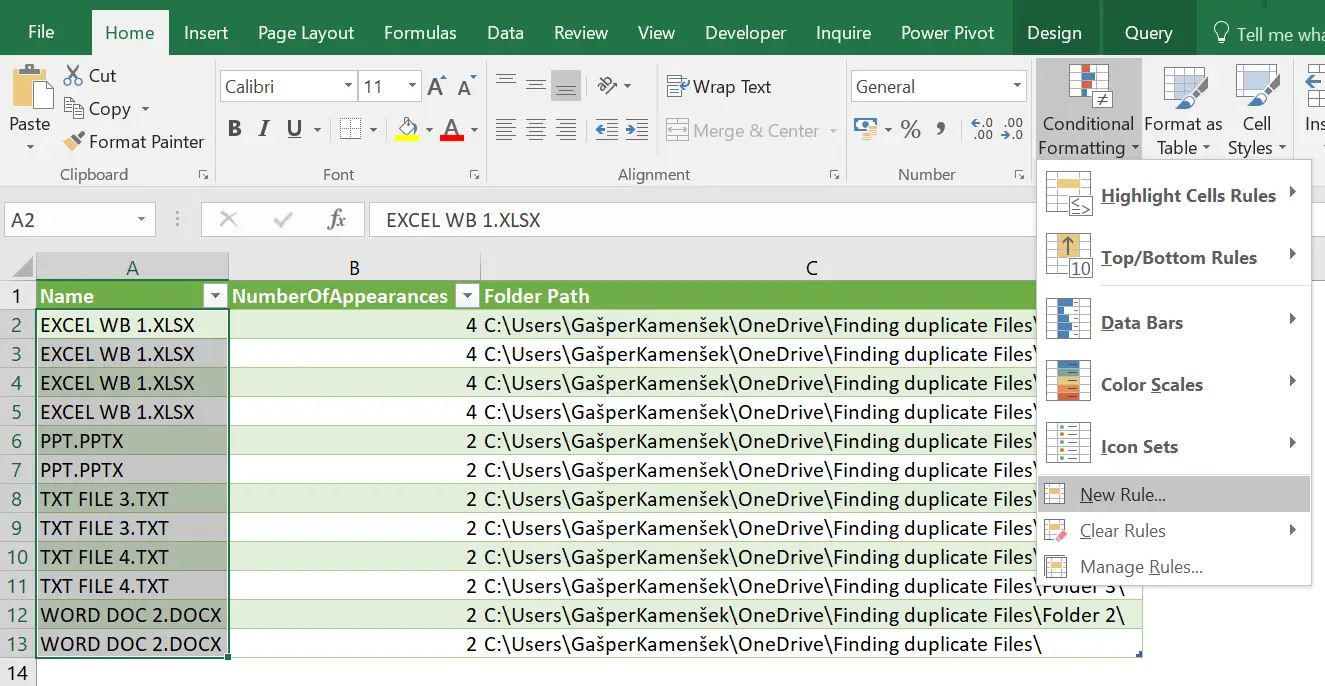

Text="What is the average math score for male students?" # Function to execute SQL query on SQLite database Prompt = """Please regard the following data:\n \nSQL Query:""".format(table_name,columns,text) Input_text='''What is the average math score for male students?''' The API will then extract the relevant information from the data and provide it in the response. This can be done by reading the tabular data from a CSV file, preparing the input for the API, and passing it along with the input text. You can extract information by providing the tabular data and input text to the ChatGPT API. Read_csv=pd.read_csv("Student.csv") Step 2: Use the ChatGPT APIīefore we begin utilizing the ChatGPT API, please make sure that you have installed OpenAI Python library in your system. We have stored our tabular data in a CSV file, you can read the CSV file using “Pandas” Python library and pass the data to the ChatGPT API for information extraction. Here’s an example of how you can utilize the ChatGPT API to extract information from tabular data: Step 1: Prepare Input It can analyze the text-based input provided by the user, interpret the query, and generate a response based on the content of the tabular data.

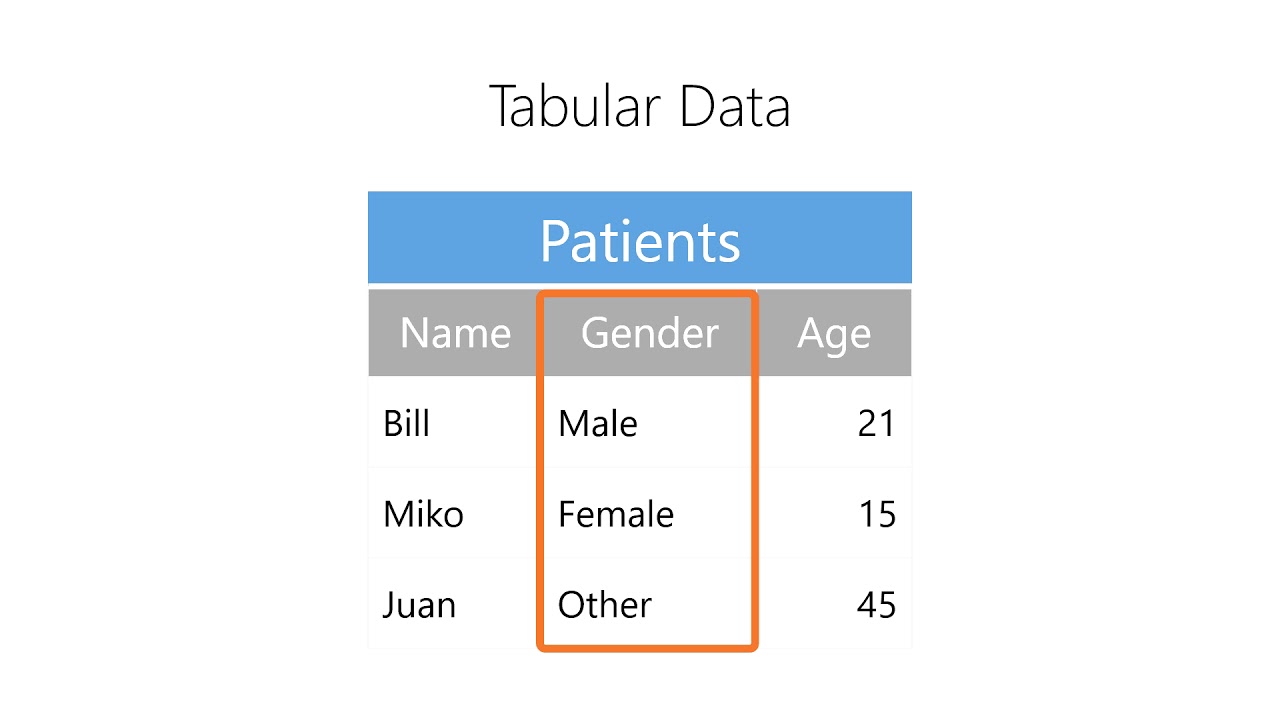

genderĬhatGPT relies solely on natural language processing (NLP) techniques to understand and extract information from tabular data. Please see the data provided below, which will be used for the purpose of this blog. Note that we have only taken into account 30 records from the dataset. These data are taken from the following: Datasetįor all illustrations in this post, We will be utilizing the following data. This blog describes you the process of extracting useful information from tabular data using ChatGPT API. OpenAI announced an official API for ChatGPT which is powered by gpt-3.5-turbo, OpenAI’s most advanced language model. The emergence of advanced language models such as ChatGPT has introduced a promising and innovative approach to extracting useful information from tabular data. Conventional approaches typically require manual exploration and analysis of data, which can be requires a significant amount of effort, time, or workforce to complete. KmsConnectionConfig()Ĭonfiguration of the connection to the Key Management Service (KMS)ĮncryptionConfiguration(footer_key)Ĭonfiguration of the encryption, such as which columns to encryptĭecryptionConfiguration()Ĭonfiguration of the decryption, such as cache timeout.In the world of data analysis, extracting useful information from tabular data can be a difficult task. The abstract base class for KmsClient implementations. Statistics for a single column in a single row group.Ī factory that produces the low-level FileEncryptionProperties and FileDecryptionProperties objects, from the high-level parameters. Wrapper around dataset.write_dataset (when use_legacy_dataset=False) or parquet.write_table (when use_legacy_dataset=True) for writing a Table to Parquet format by partitions. Write metadata-only Parquet file from schema. Read effective Arrow schema from Parquet file metadata. 
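The sketch below covers Steps 1 and 2 and assumes the table is small enough to paste into the prompt as plain text. To run without extra dependencies, it uses Python's built-in `csv` module in place of pandas. The sample rows, the prompt wording, and the helper names `table_to_text` and `build_messages` are all hypothetical. The resulting `messages` list follows the role/content format of OpenAI's Chat Completions API. With the `openai` package (version 1.x assumed), you would send it with `OpenAI().chat.completions.create(model="gpt-3.5-turbo", messages=messages)`.

```python
import csv
import io

# Hypothetical sample rows for illustration only; the post's own data comes from Student.csv.
SAMPLE_CSV = """gender,math score
female,72
male,69
male,90
female,47
"""


def table_to_text(csv_text: str) -> str:
    """Serialize CSV rows into a pipe-separated plain-text table for the prompt."""
    rows = list(csv.reader(io.StringIO(csv_text)))
    return "\n".join(" | ".join(row) for row in rows)


def build_messages(csv_text: str, question: str) -> list[dict]:
    """Build a chat-style message list that pairs the table with the user's question."""
    table = table_to_text(csv_text)
    user_content = (
        "Please regard the following data:\n"
        f"{table}\n\n"
        f"Question: {question}\n"
        "Answer using only the data above."
    )
    return [
        {"role": "system", "content": "You answer questions about tabular data."},
        {"role": "user", "content": user_content},
    ]


if __name__ == "__main__":
    messages = build_messages(SAMPLE_CSV, "What is the average math score for male students?")
    print(messages[1]["content"])
    # Assumption (openai>=1.0, not executed here): the API call would be
    # OpenAI().chat.completions.create(model="gpt-3.5-turbo", messages=messages)
```

Serializing the table as text keeps the request self-contained, but the whole table counts against the model's context window. This approach therefore suits small excerpts like the 30-record sample used in this post.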
